Download and view pictures & videos from websites

Software for bulk downloading images, videos, MP3s, and any other files. NeoDownloader is a fast and convenient solution for bulk downloading files from websites. It is mostly intended to help you download and view thousands of your favorite pictures, photos, wallpapers, videos, MP3s, and other files automatically.

Jul 08, 2015. Simple bulk image download extension: filter by resolution and file type, and download photos from multiple tabs in one click. If you need to bulk download images from one or multiple web pages, this extension lets you choose which tabs to download from: all tabs, the current tab, tabs left of the current tab, or tabs right of the current tab. It also shows the images that the page contains and links to.


I am using wget to download all images from a website. It works fine, but it reproduces the original hierarchy of the site with all its subfolders, so the images are scattered across many directories. Is there a way to download all the images into a single folder? The syntax I'm using at the moment is:

Monica Heddneck

geoffs3310

7 Answers

Try this:

Here is some more information:

- -nd prevents the creation of a directory hierarchy (i.e. no directories).
- -r enables recursive retrieval. See Recursive Download for more information.
- -P sets the directory prefix where all files and directories are saved to.
- -A sets a whitelist for retrieving only certain file types. Strings and patterns are accepted, and both can be used in a comma separated list (as seen above). See Types of Files for more information.
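As a rough sketch of how the four flags described above combine into one command, the script below wraps them in a small function. The save directory, the site URL, and the extension list are placeholders chosen for illustration, not values from this thread. The `run` helper is also an assumption of this sketch: it only prints the command unless RUN=1 is set, so the command can be reviewed before anything is downloaded.

```shell
#!/bin/sh
# Dry-run helper (hypothetical): prints the command unless RUN=1 is set.
run() {
  if [ "${RUN:-0}" = "1" ]; then "$@"; else echo "$@"; fi
}

# $1 = directory to save into, $2 = site URL.
# -nd: no directory hierarchy, -r: recursive retrieval,
# -P: directory prefix, -A: whitelist of accepted suffixes (example list).
download_images() {
  run wget -nd -r -P "$1" -A "jpeg,jpg,bmp,gif,png" "$2"
}

# Placeholder arguments; replace them with a real directory and site.
download_images ./images https://example.com/
```

Calling the script as `RUN=1 sh script.sh` would start the actual download.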

Jon

- -nd: no directories (save all files to the current directory; -P directory changes the target directory)
- -r -l 2: recursive, level 2
- -A: accepted extensions

- -H: span hosts (wget doesn't download files from different domains or subdomains by default)
- -p: page requisites (includes resources like images on each page)
- -e robots=off: execute the command robots=off as if it were part of the .wgetrc file. This turns off robot exclusion, which means wget ignores robots.txt and the robot meta tags (you should know the implications this comes with; take care).
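The two groups of flags above could be combined roughly as follows. This is a sketch under assumptions: the function names, the URLs, and the extension list are invented for illustration, and the `run` helper only prints each command unless RUN=1 is set.

```shell
#!/bin/sh
# Dry-run helper (hypothetical): prints the command unless RUN=1 is set.
run() {
  if [ "${RUN:-0}" = "1" ]; then "$@"; else echo "$@"; fi
}

# Follow links up to two levels deep and keep only image files.
fetch_two_levels() {
  run wget -nd -r -l 2 -A jpg,jpeg,png,gif "$1"
}

# Fetch a single page plus the images it needs, even from other hosts.
# -e robots=off ignores robots.txt, so use it with care.
fetch_page_images() {
  run wget -nd -H -p -A jpg,jpeg,png,gif -e robots=off "$1"
}

# Placeholder URLs; replace them with the pages you want.
fetch_two_levels https://example.com/
fetch_page_images https://example.com/gallery.html
```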

Example: Get all .jpg files from an exemplary directory listing:

hakre

Lri

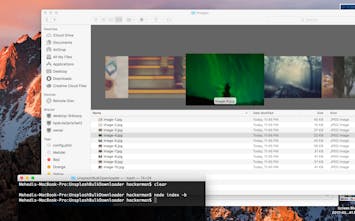

I wrote a shell script that solves this problem for multiple websites: https://github.com/eduardschaeli/wget-image-scraper

(Scrapes images from a list of URLs with wget)
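The general idea of scraping a list of URLs with wget can be sketched as a simple loop. This is not the linked script, only a minimal illustration. The list file, the per-page output folders (images/1, images/2, ...), and the example URLs are all assumptions, and the `run` helper prints each command unless RUN=1 is set.

```shell
#!/bin/sh
# Dry-run helper (hypothetical): prints the command unless RUN=1 is set.
run() {
  if [ "${RUN:-0}" = "1" ]; then "$@"; else echo "$@"; fi
}

# $1 = text file with one page URL per line.
scrape_list() {
  n=0
  while IFS= read -r url; do
    [ -n "$url" ] || continue   # skip blank lines
    n=$((n + 1))
    # Each page gets its own folder: images/1, images/2, ...
    run wget -nd -p -P "images/$n" -A jpg,jpeg,png,gif "$url"
  done < "$1"
}

# Demo input: two placeholder URLs written to a temporary file.
list_file="$(mktemp)"
printf '%s\n' "https://example.com/a.html" "https://example.org/b.html" > "$list_file"
scrape_list "$list_file"
rm -f "$list_file"
```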

ezy

Try this one:

and wait until it deletes all extra information

whoan

orezvani

According to the man page the -P flag is:

-P prefix
--directory-prefix=prefix
    Set directory prefix to prefix. The directory prefix is the directory where all other files and subdirectories will be saved to, i.e. the top of the retrieval tree. The default is . (the current directory).

This means that -P only specifies the destination, i.e. where to save the directory tree. It does not flatten the tree into a single directory. As mentioned before, the -nd flag is what actually does that.

@Jon, in the future it would be beneficial to describe what each flag does so we understand how something works.
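To make the difference concrete, here is a small sketch that only computes file paths and never calls wget. It assumes wget's default behaviour for recursive downloads, where the host name and the remote directories are recreated under the prefix. The URL and the prefix are placeholders.

```shell
#!/bin/sh
# Placeholder values for illustration only.
url="http://example.com/photos/2015/cat.jpg"
prefix="images"

# With -P alone, the host and remote directories are kept under the prefix.
tree_path="$prefix/${url#*://}"
# With -nd added, only the file name is kept.
flat_path="$prefix/${url##*/}"

printf '%s\n' "-P only:    $tree_path"
printf '%s\n' "-P and -nd: $flat_path"
```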

Michael Yagudaev

The proposed solutions work well for downloading the images, as long as it is enough for you to save all the files in the directory you are currently in. But if you want to save all the images in a specified directory without reproducing the entire hierarchical tree of the site, try adding 'cut-dirs' to the line proposed by Jon.

In this case, cut-dirs prevents wget from creating sub-directories down to the third level of depth in the site's hierarchical tree, saving all the files in the directory you specified. You can use a higher 'cut-dirs' number if you are dealing with sites with a deep structure.
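The effect of a cut-dirs number on the saved path can be approximated with a small helper. This sketch does not call wget. It mimics the documented behaviour of --cut-dirs=N, which ignores the first N directory components of the remote path, and it assumes the host directory is already suppressed (wget's -nH option). The function name and the example path are hypothetical.

```shell
#!/bin/sh
# $1 = remote path without the host, $2 = number of leading directories to drop.
cut_dirs() {
  cd_path="$1"
  cd_n="$2"
  # Strip one leading directory per step, stopping at the bare file name.
  while [ "$cd_n" -gt 0 ] && [ "${cd_path#*/}" != "$cd_path" ]; do
    cd_path="${cd_path#*/}"
    cd_n=$((cd_n - 1))
  done
  printf '%s\n' "$cd_path"
}

cut_dirs "photos/2015/07/cat.jpg" 2   # prints 07/cat.jpg
cut_dirs "photos/2015/07/cat.jpg" 3   # prints cat.jpg
```

Under these assumptions, a number at least as large as the deepest directory level leaves only the file name, which is the flattening the answer is after.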

bgoodr

hugi coapete

The wget utility retrieves files from the World Wide Web (WWW) using widely used protocols such as HTTP, HTTPS and FTP. It is a freely available package licensed under the GNU GPL. It can be installed on any Unix-like operating system, and also on Windows and Mac OS. It is a non-interactive command-line tool. Its main feature is robustness: it is designed to work over slow or unstable network connections. If a download is interrupted by a network problem, wget automatically resumes it from where it left off and keeps trying until the file has been retrieved completely. It can also download files recursively.

Install wget on a Linux machine:

sudo apt-get install wget

Create a folder where you want to download the files, then change into it:

mkdir myimages
cd myimages

To get the address of an image, right-click the image on the web page and copy the image location. If there are multiple images, follow the steps below.

If there are 20 images to download all at once and they are numbered from 0 to 19, use a range:

wget http://joindiaspora.com/img{0..19}.jpg
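The `{0..19}` range is expanded by the shell (bash, for example), not by wget. In a plain POSIX sh without brace expansion, the same list of URLs can be produced with a loop, as in this sketch. It reuses the URL pattern from the command above. The function name is hypothetical, and the script only prints the list unless RUN=1 is set.

```shell
#!/bin/sh
# Base of the numbered image URLs, taken from the command above.
BASE_URL="http://joindiaspora.com/img"

# Print one URL per line: img0.jpg through img19.jpg.
list_urls() {
  i=0
  while [ "$i" -le 19 ]; do
    echo "$BASE_URL$i.jpg"
    i=$((i + 1))
  done
}

if [ "${RUN:-0}" = "1" ]; then
  # "-i -" tells wget to read the URLs from standard input.
  list_urls | wget -i -
else
  list_urls
fi
```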

Trupti Kini



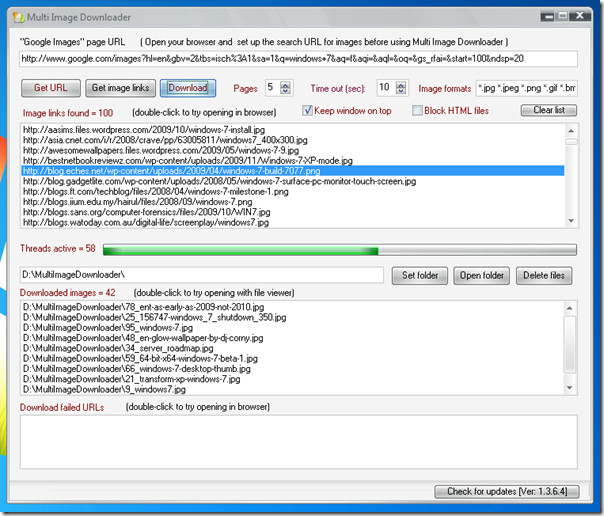

I need a program that I can give a list of URLs to (either pasted or in a file) like below. It must then be able to crawl those links and save files of a certain type, such as images. I have tried a few spiders but have not had any luck.

Currently, the only way to download everything is to open each link and then use the 'DownThemAll!' Firefox plugin, which selects all the images (or any file type) on the page and downloads them. This works page by page, but I need something similar that works on a whole list of URLs.

Does anyone have any suggestions? Thanks a lot.

PS. Could I also add that it should be something fairly easy to use, with a half-decent user interface, that doesn't run from the command line. Thanks

Cheeky

Cheeky

closed as off-topic by DavidPostill♦, mdpc, nc4pk, Steven, Dave, Jul 7 '15 at 13:48

This question appears to be off-topic. The users who voted to close gave this specific reason:

- 'Questions seeking product, service, or learning material recommendations are off-topic because they become outdated quickly and attract opinion-based answers. Instead, describe your situation and the specific problem you're trying to solve. Share your research. Here are a few suggestions on how to properly ask this type of question.' – DavidPostill, mdpc, nc4pk, Steven, Dave

4 Answers

There's not been any way of doing this from a browser or without downloading dodgy one-hit wonder freeware so I've written a Chrome browser extension that fits the bill.

It's called TabSave, available in the webstore here.

You can paste in a list of URLs and it'll download them, no fuss :-)

It also has the ability to download every tab open in the active window, hence the name. This is the default, just click the edit button to insert a list yourself.

It's all open source, the GitHub repo is linked in the webstore description if you want to send a pull request (or suggest a feature).

Louis Maddox

For your needs, Chrono Download Manager or TabSave can download a list of links quickly. Both are Chrome extensions, so no need to download desktop software.

And maybe this could be useful for you:

In my own experience, I prefer Chrono Download Manager because I needed to rename the downloaded files automatically, in batch (a list of videos from, hmm hmm... online courses). In the HTML code, all the different videos have the same filename, so downloading them with TabSave just gives you videos with the same name, and you have to guess which content is which (something like '543543.mp4', '543543(1).mp4', '543543(2).mp4' and so on). Imagine how much extra work it takes to sort that out.

If you need to quickly download a list of files as-is, go with TabSave. If you need to change the file names on the fly, go with Chrono.

Knomo Seikei

I know I'm gravedigging here, but I was searching for a similar program and found BitComet, which works really well. You can import URLs from text files, etc.

ertan

OK, I have found an application that does it beautifully. It's called Picture Ripper.

Thanks for the help anyway Dudko!

Sathyajith Bhat♦

Cheeky